Chapter 9 Performance Measures

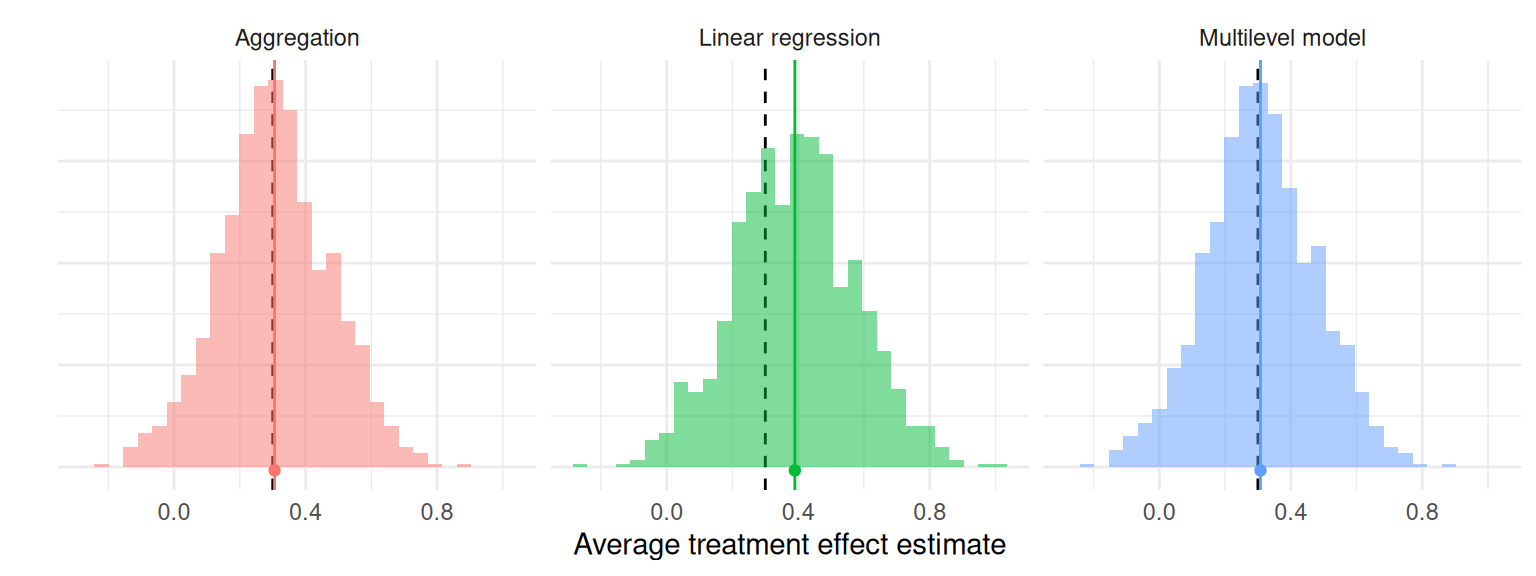

Once we run a simulation, we end up with a pile of results to sort through. For example, Figure 9.1 depicts the distribution of average treatment effect estimates from the cluster-randomized experiment simulation we generated in Section 8.3. There are three different estimators, each with 1000 replications. Each histogram is an approximation of the sampling distribution of the estimator, meaning its distribution across repetitions of the data-generating process. Visually, we see the three estimators look about the same—they sometimes estimate too low, and sometimes estimate too high. We also see linear regression is shifted up a bit, and aggregation and MLM might have a few more outlying values. These observations are initial impressions of how well each estimator performs—that is, how closely the estimator approximates the truth. In this chapter, we look at how to more precisely quantify specific aspects of estimator performance and how to compare the performance of different estimators, using what we will call performance measures.

Figure 9.1: Sampling distribution of average treatment effect estimates from a cluster-randomized trial with a true average treatment effect of 0.3 (dashed line).

A performance measure summarizes an estimator’s sampling distribution to describe how an estimator would behave on average if we were to repeat the data-generating process an infinite number of times. For example, the bias of an estimator is the difference between the average value of the estimator and the corresponding target parameter. Bias measures the central tendency of the sampling distribution, capturing whether we are systematically off, on average, from the true parameter value.

In Figure 9.1, the black dashed lines mark the true average treatment effect of 0.3 and the solid lines with the dot mark the means of the estimators. The horizontal distance between the solid and dashed lines corresponds to the bias of the estimator. This distance is nearly zero for the aggregation estimator and the multilevel model estimator, but larger for the linear regression estimator. Our performance measure of bias would be near zero for aggregation and multilevel modeling, but around 0.05 to 0.1 for linear regression.

Many performance measures exist. For point estimates, conventional performance measures include bias, variance, and root mean squared error. If the point estimates come with corresponding standard errors, then we may also want to evaluate how accurately the standard errors represent the true uncertainty of the point estimators; conventional performance measures for capturing this include the relative bias and relative root mean squared error of the variance estimator. For procedures that produce confidence intervals or other types of interval estimates, conventional performance measures include the coverage rate and average interval width. Finally, for inferential procedures that involve hypothesis tests (or more generally, classification tasks), conventional performance measures include Type I error rates and power. We describe each of these measures in Sections 9.1 through 9.4.

For any particular measure, the actual performance of an estimator depends on the data generating process under which it is evaluated. Performance measures are defined with respect to the sampling distribution of an estimator, which is the distribution of values we would see across different datasets that all came from the same DGP. We will therefore need to use some statistical notation to define properties of this sampling distribution. In the following, we define many of the most common measures in terms of standard statistical quantities such as the mean, variance, and other moments of the sampling distribution. Notation-wise, for a random variable \(T\) (e.g., for some estimator \(T\)), we will use the expectation operator \(\E(T)\) to denote the mean, \(\M(T)\) to denote the median, \(\Var(T)\) to denote the variance, and \(\Prob()\) to denote probabilities of specific outcomes, all with respect to the sampling distribution of \(T\). We also use \(\Q_p(T)\) to denote the \(p^{th}\) quantile of a distribution, which is the value \(x\) such that \(\Prob(T \leq x) = p\). With this notation, the median of a continuous distribution is equivalent to the 0.5 quantile: \(\M(T) = \Q_{0.5}(T)\). Using this notation we can write, for example, that the bias of \(T\) is \(\E(T) - \theta\), where \(\theta\) is the target parameter.

For some simple combinations of data-generating processes and data analysis procedures, it may be possible to derive exact mathematical formulas for some performance measures (such as exact mathematical expressions for the bias and variance of the linear regression estimator). But for many problems, the math is difficult or intractable—which is partly why we do simulations in the first place. However, simulations do not produce the exact sampling distribution or give us exact values of performance measures. Instead, simulations generate samples (usually large samples) from the the sampling distribution, and we can use these to estimate the performance measures of interest. In Figure 9.1, for example, we estimated the bias of each estimator by taking the mean of 1000 observations from its sampling distribution. If we were to repeat the whole simulation (with a different seed), then our bias results would shift slightly because they are imperfect estimates of the actual bias.

In working with simulation results, it is important to track how much uncertainty we have in our performance measure estimates. We call such uncertainty Monte Carlo error because it is the error arising from using a finite number of replications of the Monte Carlo simulation process. One way to quantify the simulation uncertainty is with the Monte Carlo Standard Error (MCSE), which is the standard error of a performance estimate given a specific number of replications. Just as when we analyze real data, we can apply statistical techniques to estimate the MCSE and even to generate confidence intervals for performance measures.

The magnitude of the MCSE is driven by how many replications we use: if we only use a few, we will have noisy estimates of performance with large MCSEs; if we use millions of replications, the MCSE will usually be tiny. It is important to keep in mind that the MCSE is not measuring anything about how our estimator performs. It only describes how precisely we have estimated its performance; MCSE is an artifact of how we conducted the simulation. The MCSE are also under our control: Given a desired MCSE, we can determine how many replications we would need to ensure our performance estimates have a MCSE of that size. Section 9.7 provides details about how to compute MCSEs for conventional performance measures, along with some discussion of general techniques for computing MCSE for less conventional measures.

9.1 Measures for Point Estimators

The most common performance measures used to assess a point estimator are bias, variance, and root mean squared error.

Bias compares the mean of the sampling distribution to the target parameter. Positive bias implies that the estimator tends to systematically over-state the quantity of interest, while negative bias implies that it systematically under-shoots the quantity of interest. If bias is zero (or nearly zero), we say that the estimator is unbiased (or approximately unbiased).

Variance (or its square root, the true standard error) describes the spread of the sampling distribution, or the extent to which it varies around its central tendency. All else equal, we would like estimators to have low variance (which means being more precise).

Root mean squared error (RMSE) is a conventional measure of the overall accuracy of an estimator, or its average degree of error with respect to the target parameter. For absolute assessments of performance, an estimator with low bias, low variance, and thus low RMSE is desired. In making comparisons of several different estimators, one with lower RMSE is usually preferable to one with higher RMSE. If two estimators have comparable RMSE, then the estimator with lower bias would usually be preferable.

To define these quantities more precisely, consider a generic estimator \(T\) that is targeting a parameter \(\theta\). We call the target parameter the estimand. Formally, the bias, variance, and RMSE of \(T\) are then defined as \[ \begin{aligned} \Bias(T) &= \E(T) - \theta, \\ \Var(T) &= \E\left[\left(T - \E (T)\right)^2 \right], \\ \RMSE(T) &= \sqrt{\E\left[\left(T - \theta\right)^2 \right]}. \end{aligned} \tag{9.1} \] In words, bias is the average estimate minus the truth—is it systematically too high or too low, on average? The variance is the average squared deviation of the estimator from its own mean—how much does it vary around its average estimate? Is it precise? RMSE is the square root of the average squared deviation of the estimator from the truth—how far off are we, on average, from the truth? Does it predict well?

These three measures are inter-connected. In particular, RMSE is the combination of (squared) bias and variance, as in \[ \left[\RMSE(T)\right]^2 = \left[\Bias(T)\right]^2 + \Var(T). \tag{9.2} \]

When conducting a simulation, we do not compute these performance measures directly but rather must estimate them. In most cases, in running a simulation we generate a (typically large) series of \(R\) datasets, with \(\theta\) being the true target parameter in each of them. We analyze each dataset, obtaining a sample of estimates \(T_1,...,T_R\) drawn from the sampling distribution. There is nothing very surprising about how we construct estimates of the performance measures. It is just a matter of substituting sample quantities in place of the expectations and variances. Specifically, we estimate bias by taking \[ \widehat{\Bias}(T) = \bar{T} - \theta, \tag{9.3} \] where \(\bar{T}\) is the arithmetic mean of the replicates, \(\bar{T} = \frac{1}{R}\sum_{r=1}^R T_r\). We estimate variance by taking the sample variance of the replicates, as \[ S_T^2 = \frac{1}{R - 1}\sum_{r=1}^R \left(T_r - \bar{T}\right)^2. \tag{9.4} \] \(S_T\) (the square root of \(S^2_T\)) is an estimate of the true standard error, \(SE\), of \(T\), which is the standard deviation of the estimator across an infinite set of replications of the data-generating process. Generally, when people say “Standard Error” they actually mean estimated Standard Error, (\(\widehat{SE}\)), as we generally calculate in a real data analysis (where we have only a single realization of the data-generating process). It is easy to forget that this standard error is itself an estimate of a parameter—the true SE—and thus has its own uncertainty. We usually prefer to work with the true SE \(S_T\) rather than the sampling variance \(S_T^2\) because the former quantity has the same units as the target parameter.

Finally, the RMSE estimate can be calculated as \[ \widehat{\RMSE}(T) = \sqrt{\frac{1}{R} \sum_{r = 1}^R \left( T_r - \theta\right)^2 }. \tag{9.5} \] Often, people talk about the MSE (Mean Squared Error), which is just the square of RMSE. Just as the true SE is usually easier to interpret than the sampling variance, units of RMSE are easier to interpret than the units of MSE.

All of these performance measures depend on the scale of the parameter. For example, if an estimator is targeting a treatment impact in dollars, then the bias, SE, and RMSE of the estimator are all in dollars. (The variance and MSE would be in dollars squared; taking square roots puts these measures back on the more intepretable scale of dollars.)

In many simulations, the scale of the outcome is an arbitrary feature of the data-generating process, so the absolute magnitude of the performance measure is not meaningful. To ease interpretation of performance measures, it is useful to consider their magnitude relative to some reference amount. We generally recommend using the baseline level of variation in the outcome. For example, we might report a bias of \(0.2\sigma\), where \(\sigma\) is the standard deviation of the outcome in the population.

One way to ensure performance metrics are on such a standardized scale is to generate data so the outcome has unit variance (i.e., we generate outcomes in standardized units). This automatically puts the bias, true standard error, and root mean squared error on the scale of standard deviation units, which can facilitate interpretation about what constitutes a meaningfully large bias or a meaningful difference in RMSE.

Finally, a non-linear transformation of a parameter will also generally change a performance measure. For instance, suppose that \(\theta\) measures the proportion of time that something occurs. This parameter could also be given on the log-odds (logit) scale. However, because the log-odds transformation is non-linear, \[ \text{Bias}\left[\text{logit}(T)\right] \neq \text{logit}\left(\text{Bias}[T]\right), \qquad \text{RMSE}\left[\text{logit}(T)\right] \neq \text{logit}\left(\text{RMSE}[T]\right), \] and so on.

9.1.1 Comparing the Performance of the Cluster RCT Estimation Procedures

We now demonstrate the calculation of performance measures for the point estimators of average treatment effects in the cluster-RCT example. We calculate performance measures to address the following questions:

- Is the estimator systematically off? (bias)

- Is it precise? (standard error)

- Does it predict well? (RMSE)

Let us see how the three estimators compare on these measures.

Are the estimators biased?

Bias is defined with respect to a target estimand. Here we assess whether our estimates are systematically different from the \(\gamma_1\) parameter, which we defined in standardized units by setting the standard deviation of the student-level distribution of the outcome equal to one. In our simulation, we generated data with a school-level ATE parameter of 0.30 SDs.

ATE <- 0.30

runs %>%

group_by( method ) %>%

summarise(

mean_ATE_hat = mean( ATE_hat ),

bias = mean( ATE_hat ) - ATE

)## # A tibble: 3 × 3

## method mean_ATE_hat bias

## <chr> <dbl> <dbl>

## 1 Agg 0.300 -0.000166

## 2 LR 0.382 0.0824

## 3 MLM 0.312 0.0122There is no indication of major bias for aggregation or multi-level modeling. Linear regression, with a bias of about 0.08 SDs, appears much more biased than the other estimators. This is because the linear regression is targeting the person-level average treatment effect. Our data-generating process makes larger sites have larger effects, so the person-level average effect is going to be higher because those larger sites count more in the overall average. In contrast, our estimand is the school-level average treatment effect, or the simple average of each school’s true impact, which we have set to 0.30. The aggregation and multi-level modeling methods target this school-level average effect. If we had instead decided that the target estimand should be the person-level average effect, then we would find that linear regression is unbiased whereas aggregation and multi-level modeling are biased. This example illustrates how crucial it is to think carefully about the appropriate target parameter and to assess performance with respect to a well-justified and clearly articulated target.

Which method has the smallest standard error?

The standard error measures the degree of variability in a point estimator. It reflects how stable an estimator is across replications of the data-generating process. We estimate the standard error by taking the standard deviation of the set of estimates of each estimator. For purposes of interpretation, it is useful to compare the empirical standard errors to the variation in a benchmark estimator. Here, we treat the linear regression estimator as the benchmark and compute the magnitude of the empirical SEs of each method relative to the SE of the linear regression estimator:

true_SE <-

runs %>%

group_by( method ) %>%

summarise( SE = sd( ATE_hat ) ) %>%

mutate( per_SE = SE / SE[method=="LR"] )

true_SE## # A tibble: 3 × 3

## method SE per_SE

## <chr> <dbl> <dbl>

## 1 Agg 0.214 0.956

## 2 LR 0.224 1

## 3 MLM 0.213 0.953Aggregation and multi-level modeling have SEs about 4 to 5% smaller than linear regression, meaning these estimates tend to be a bit less variable across different datasets. For these data-generating conditions, aggregation and multi-level modeling are preferable to linear regression because they are more precise. In a real data analysis, these standard errors are what we would be trying to approximate with a standard error estimator.

Which method has the smallest Root Mean Squared Error?

So far linear regression is not doing well: it has more bias and a larger standard error than the other two estimators. We can assess overall accuracy by combining these two quantities with the RMSE:

runs %>%

group_by( method ) %>%

summarise(

bias = mean( ATE_hat - ATE ),

SE = sd( ATE_hat ),

RMSE = sqrt( mean( (ATE_hat - ATE)^2 ) )

) %>%

mutate(

per_RMSE = RMSE / RMSE[method=="LR"]

)## # A tibble: 3 × 5

## method bias SE RMSE per_RMSE

## <chr> <dbl> <dbl> <dbl> <dbl>

## 1 Agg -0.000166 0.214 0.214 0.897

## 2 LR 0.0824 0.224 0.238 1

## 3 MLM 0.0122 0.213 0.213 0.896We also include SE and bias for ease of reference.

RMSE takes into account both bias and variance. For aggregation and multi-level modeling, the RMSE is the same as the standard error, which makes sense because these estimators are not biased. For linear regression, the combination of bias plus increased variability yields a higher RMSE, with the standard error dominating the bias term (note how RMSE and SE are more similar than RMSE and bias). The difference between the estimators are pronounced because RMSE is the square root of the squared bias and squared standard error. Overall, aggregation and multi-level modeling have RMSEs around 10% smaller than linear regression, meaning they tend to produce estimates that are closer to the truth than linear regression.

9.1.2 Robust Performance Measures

We have introduced bias, variance and RMSE as three core measures of performance for point estimators. However, all of these measures are sensitive to outliers in the sampling distribution. Consider an estimator that generally does well, except for an occasional large mistake. Because conventional measures are based on arithmetic averages, they could indicate that the estimator performs very poorly overall, when in fact it performs very well most of the time and terribly only rarely.

If we are more curious about typical performance, then we might prefer to use other performance measures, such as the median bias and the median absolute deviation of \(T\), which are less sensitive to outliers in the sampling distribution. These are called “robust” measures because they are not strongly affect by a few extreme values. The overall approach for most robust measures is to simply chop off some percent of the most extreme values, and then look at the central tendency or spread of the remaining values. Estimating these measures will involve calculating sample quantiles of \(T_1,...,T_R\), which are functions of the sample ordered from smallest to largest.

Median bias is an alternative measure of the central tendency of a sampling distribution. Here we “chop off” essentially all the values, focusing on the middle one alone Positive median bias implies that more than 50% of the sampling distribution exceeds the quantity of interest, while negative median bias implies that more than 50% of the sampling distribution fall below the quantity of interest. Formally, \[ \text{Median-Bias}(T) = \M(T) - \theta \tag{9.6}. \] where \(\M(T)\) is the median of \(T\).

We estimate median bias with the sample median, as \[ \widehat{\text{Median-Bias}}(T) = M_T - \theta \tag{9.7} \] where, if we define \(T_{(r)}\) as the \(r^{th}\) order statistic for \(r = 1,...,R\), we have \(M_T = T_{((R+1)/2)}\) if \(R\) is odd or \(M_T = \frac{1}{2}\left(T_{(R/2)} + T_{(R/2+1)}\right)\) if \(R\) is even.

Another robust measure of central tendency uses the \(p \times 100\%\)-trimmed mean, which ignores the estimates in the lowest and highest \(p\)-quantiles of the sampling distribution. Formally, the trimmed-mean bias is \[ \text{Trimmed-Bias}(T; p) = \E\left[ T \left| \Q_{p}(T) < T < \Q_{(1 - p)}(T) \right.\right] - \theta. \tag{9.8} \] Median bias is thus a special case of trimmed mean bias, with \(p = 0.5\). To estimate the trimmed bias, we take the mean of the middle \(1 - 2p\) fraction of the distribution \[ \widehat{\text{Trimmed-Bias}}(T; p) = \tilde{T}_{\{p\}} - \theta. \tag{9.9} \] where (assuming \(pR\) is a whole number) \[ \tilde{T}_{\{p\}} = \frac{1}{(1 - 2p)R} \sum_{r=pR + 1}^{(1-p)R} T_{(r)} \] For a symmetric sampling distribution, the trimmed-mean bias will be the same as the conventional (mean) bias, but its estimator \(\tilde{T}_{\{p\}}\) will be less affected by outlying values (i.e., values of \(T\) very far from the center of the distribution) compared to \(\bar{T}\). However, if a sampling distribution is not symmetric, trimmed-mean bias will be a distinct performance measure, different from mean bias, that puts less emphasis on large errors compared to the conventional bias measure.

A further robust measure of central tendency is based on winsorizing the sampling distribution, or truncating all errors larger than a certain maximum size. A winsorized distribution amounts to arguing that you don’t care about errors beyond a certain size, so anything beyond a certain threshold will be treated the same as if it were exactly on the threshold. The threshold for truncation is often defined relative to the first and third quartiles of the sampling distribution, along with a given span of the inter-quartile range. In this case, the thresholds for truncation would be \[ \begin{aligned} L_w &= \Q_{0.25}(T) - w \times (\Q_{0.75}(T) - \Q_{0.25}(T)) \\ U_w &= \Q_{0.75}(T) + w \times (\Q_{0.75}(T) - \Q_{0.25}(T)), \end{aligned} \] where \(\Q_{0.25}(T)\) and \(\Q_{0.75}(T)\) are the first and third quartiles of the distribution of \(T\), respectively, and \(w\) is the number of inter-quartile ranges below which an observation will be treated as an outlier.13 Once we have calculated the thresholds, we compute a truncated estimate as \(X = \min\{\max\{T, L_w\}, U_w\}\). The winsorized bias, variance, and RMSE are then defined using winsorized values in place of the raw values of \(T\), as \[ \begin{aligned} \text{Bias}(X) &= \E\left(X\right) - \theta \\ \Var\left(X\right) &= \E\left[\left(X - \E (X)\right)^2 \right], \\ \RMSE\left(X\right) &= \sqrt{\E\left[\left(X - \theta\right)^2 \right]}. \end{aligned} \tag{9.10} \] To compute estimates of the winsorized performance criteria, we substitute sample quantiles \(T_{(R/4)}\) and \(T_{(3R/4)}\) in place of \(\Q_{0.25}(T)\) and \(\Q_{0.25}(T)\), respectively, to get estimated thresholds, \(\hat{L}_w\) and \(\hat{U}_w\), find \(\hat{X}_r = \min\{\max\{T_r, \hat{L}_w\}, \hat{U}_w\}\), and compute the sample performance measures using Equations (9.3), (9.4), and (9.5), but with \(\hat{X}_r\) in place of \(T_r\).

Alternative measures of the overall accuracy of an estimator can also be defined using quantiles.

For instance, an alternative to RMSE is to use the median absolute error (MAE), defined as

\[

\text{MAE} = \M\left(\left|T - \theta\right|\right).

\tag{9.11}

\]

Letting \(E_r = |T_r - \theta|\), the MAE can be estimated by taking the sample median of \(E_1,...,E_R\).

Many other robust measures of the spread of the sampling distribution are also available, including the Rosseeuw-Croux scale estimator \(Q_n\) (Rousseeuw and Croux 1993) and the biweight midvariance (Wilcox 2022).

Maronna, Martin, and Yohai (2006) provide a useful introduction to these measures and robust statistics more broadly.

The robustbase package (Maechler et al. 2024) provides R functions for calculating many of these robust statistics.

9.2 Measures for Variance Estimators

Statistics is concerned not only with how to estimate things (e.g., point estimates), but also with understanding how well we have estimated them (e.g., standard errors). Monte Carlo simulation studies can help us evaluate both these concerns. The prior section focused on assessing how well our estimators work. In this section we focus on assessing how well our uncertainty assessments work, looking in particular on estimation of the standard error.

A first order question is whether our estimated standard errors are calibrated, meaning whether they are the right size, on average. For this question we will examine the bias of squared standard error estimators. A second order question is whether our estimated standard errors are stable, meaning that they do not vary too much from one dataset to another. For this question we evaluate estimated standard errors in terms of effective degrees of freedom or the standard error (or RMSE) of the standard error.

9.2.1 Calibration

Calibration is a relative measure, where we look at the ratio of the expected (average) value of an uncertainty estimator to the true degree of uncertainty. The relative measure removes scale, which is important because the absolute magnitude of uncertainty will depend on many features of the data-generating process, such as sample size. In particular, across different simulation scenarios (even those with the same target parameter \(\theta\)), the true standard error of an estimator \(T\) may change considerably. In other words, the ground truth changes in magnitude from one scenario to another. With a relative measure, we can make statements such as “Our standard errors are 10% too large, on average,” which are easy to interpret. The relative measures lets us sidestep some of the complexity of dealing with wide variation in the magnitude of the ground truth.

Typically, we assess performance for variance estimators (\(\widehat{SE^2}\)) rather than standard error estimators. There are a few reasons it is more common to work with variance rather than standard error. First, in practice, so-called unbiased standard errors are not actually unbiased, whereas variance estimators might be.14 For linear regression, for example, the classic standard error estimator is an unbiased variance estimator, but the standard error estimator is not exactly unbiased because \[ \E[ \sqrt{ V } ] \neq \sqrt{ \E[ V ] }. \] Variance is also the measure that gives us the bias-variance decomposition of Equation (9.2). Thus, if we are trying to determine whether an overall MSE is due to instability or systematic bias, operating in this squared space may be preferable.

To make the calibration measure concrete, consider a generic standard error estimator \(\widehat{SE}\) to go along with our generic estimator \(T\) of target parameter \(\theta\), and let \(V = \widehat{SE^2}\) be the corresponding variance estimator.

In our simulation we obtain a large sample of standard errors, \(\widehat{SE}_1,...,\widehat{SE}_R\) and corresponding variance estimators \(V_r = \widehat{SE}_r^2\) for \(r = 1,...,R\). Formally, the relative bias or calibration of \(V\) is then defined as \[ \begin{aligned} \text{Relative Bias}(V) &= \frac{\E(V)}{\Var(T)} \\ \end{aligned} \tag{9.12} \] Relative bias describes whether the central tendency of \(V\) aligns with the actual degree of uncertainty in the point estimator \(T\). We say our variance estimator \(V\) is calibrated if the relative bias is close to one. When calibrated, \(V\) reflects how much uncertainty there actually is, on average. If relative bias is less than one, \(V\) is anti-conservative, meaning it tends to under-estimate the true uncertainty of \(T\), which would usually be considered quite bad. If relative bias is more than one, \(V\) is conservative, meaning it tends to over-estimate the true uncertainty of \(T\). This would be considered less than ideal, though perhaps not as severe an issue.

Calibration also has implications for the performance of other uncertainty assessments such as hypothesis tests and confidence intervals. A relative bias of less than 1 implies that \(V\) tends to under-state the amount of uncertainty, which will lead to confidence intervals that are overly narrow and do not cover the true parameter value at the desired rate. It also will lead to rejecting the null more often than desired, which elevates Type I error. Relative bias greater than 1 implies that \(V\) tends to over-state the amount of uncertainty in the point estimator, making confidence intervals too large and null hypotheses harder than necessary to reject.

To estimate relative bias of \(V\), we simply substitute sample quantities in place of the \(\E(V)\) and \(\Var(T)\). Unlike with performance measures for \(T\), where we know the target quantity \(\theta\), here we do not know \(\Var(T)\) exactly. Instead, we must estimate it using \(S_T^2\), the sample variance of \(T\) across replications. Denoting the arithmetic mean of the variance estimates across simulation trials as \[ \bar{V} = \frac{1}{R} \sum_{r=1}^R V_r \] we estimate the relative bias (calibration) of \(V\) using \[ \begin{aligned} \widehat{\text{Relative Bias}}(V) &= \frac{\bar{V}}{S_T^2} . \\ \end{aligned} \tag{9.13} \] If we then take the square root of our estimate, we can obtain the estimated relative bias of the standard error.

9.2.2 Stability

Beyond calibration, we also care about the stability of our variance estimator. Are the estimates of uncertainty \(V\) close to the true uncertainty \(Var(T)\), from dataset to dataset?

We offer two ways of assessing this. The first is to look at the relative SE or RMSE of the variance estimator \(V\). The second is to assess the effective degrees of freedom of \(V\), which takes into account how one would account for the uncertainty of a standard error estimator when generating confidence intervals or conducting hypothesis tests.15

The relative Variance and MSE of \(V\) as an estimate of \(Var(T)\) are defined as \[ \begin{aligned} \text{Relative Var}(V) &= \frac{\Var(V)}{\Var(T)} \\ \text{Relative MSE}(V) &= \frac{\E\left[\left(V - \Var(T)\right)^2 \right]}{\Var(T)}. \end{aligned} \] As usual, we would generally work with the the square root of these values (to get relative SE and relative RMSE). Relative standard errors describe the variability of \(V\) in comparison to the true degree of uncertainty in \(T\)—the lower the better. A relative standard error of 0.5 would mean that the variance estimator has average error of 50% of the true uncertainty, implying that \(V\) could often be off by a factor of 2—this would be very bad. Ideally, a variance estimator will have small relative bias (i.e., it is calibrated), small relative standard error (i.e., it is stable), and thus a small relative RMSE.

We can estimate the relative variance and MSE simply by plugging in estimates of the various quantities: \[ \begin{aligned} \widehat{\text{Relative Var}}(V) &= \frac{S^2_V}{S_T^2} \\ \widehat{\text{Relative MSE}}(V) &= \frac{\frac{1}{R}\sum_{r=1}^R\left(V_r - S_T^2\right)^2}{S_T^2}. \end{aligned} \tag{9.14} \] where the sample variance of \(V\) is \[ S_V^2 = \frac{1}{R - 1}\sum_{r=1}^R \left(V_r - \bar{V}\right)^2, \]

Another more abstract measure of the stability of a variance estimator is its effective degrees of freedom. For some simple statistical models such as classical analysis of variance and linear regression with homoskedastic errors, the variance estimator is a sum of squares of normally distributed errors. In such cases, the sampling distribution of the variance estimator is a multiple of a \(\chi^2\) distribution, with degrees of freedom corresponding to the number of independent observations used to compute the sum of squares. This is what leads to \(t\) statistics and so forth.

In the context of analysis of variance problems, Satterthwaite (1946) described a method of extending this idea by approximating the variability of more complex statistics (those that can be represented as a linear combination of a sum of squares), by using a chi-squared distribution with a certain degrees of freedom. When applied to an arbitrary variance estimator \(V\), these degrees of freedom can be interpreted as the number of independent, normally distributed errors going into a sum of squares that would lead to a variance estimator that is as equally precise as \(V\). More succinctly, these effective degrees of freedom correspond to the amount of independent observations used to estimate \(V\).

Following Satterthwaite (1946), we define the effective degrees of freedom of \(V\) as \[ df = \frac{2 \left[\E(V)\right]^2}{\Var(V)}. \tag{9.15} \] We can estimate the degrees of freedom by taking \[ \widehat{df} = \frac{2 \bar{V}^2}{S_V^2}. \tag{9.16} \] For simple statistical methods in which \(V\) is based on a sum-of-squares of normally distributed errors, the effective degrees of freedom will be constant and correspond exactly to the number of independent observations in the sum of squares. Even with more complex methods, the degrees of freedom are interpretable: higher degrees of freedom imply that \(V\) is based on more observations, and thus will be a more precise estimate of the actual degree of uncertainty in \(T\).

9.2.3 Assessing SEs for the Cluster RCT Simulation

Returning to the cluster RCT example, we will assess whether our estimated SEs are about right by comparing the average estimated (squared) standard error to the empirical sampling variance. Our standard errors are inflated if they are systematically larger than they should be, across the simulation runs. We will also look at how stable our variance estimates are by comparing their standard deviation to the empirical sampling variance and by computing the Satterthwaite degrees of freedom.

SE_performance <-

runs %>%

mutate( V = SE_hat^2 ) %>%

group_by( method ) %>%

summarise(

SE_sq = var( ATE_hat ),

V_bar = mean( V ),

calib = V_bar / SE_sq,

S_V = sd( V ),

rel_SE_V = S_V / SE_sq,

df = 2 * mean( V )^2 / var( V )

)

SE_performance %>%

knitr::kable( digits = c(0,3,3,2,3,2,1) )| method | SE_sq | V_bar | calib | S_V | rel_SE_V | df |

|---|---|---|---|---|---|---|

| Agg | 0.046 | 0.051 | 1.13 | 0.018 | 0.38 | 17.2 |

| LR | 0.050 | 0.055 | 1.11 | 0.023 | 0.46 | 11.5 |

| MLM | 0.045 | 0.051 | 1.12 | 0.017 | 0.38 | 17.3 |

The variance estimators all appear to be a bit conservative on average, with relative bias of around 1.12, or about 12% higher than the true sampling variance.

The column labelled rel_SE_V reports how variable the variance estimators are relative to the true sampling variances of the estimators.

The column labelled df reports the Satterthwaite degrees of freedom of each variance estimator.

Both of these measures indicate that the linear regression variance estimator is less stable than the other methods, with around 6 fewer degrees of freedom.

The linear regression method uses a cluster-robust variance estimator, which is known to be unstable with few clusters (Cameron and Miller 2015).

Overall, it is a bad day for linear regression.

9.3 Measures for Confidence Intervals

Some estimation procedures provide confidence intervals (or confidence sets) which are ranges of values that should include the true parameter value with a specified confidence level. For a 95% confidence level, the interval should include the true parameter in 95% replications of the data-generating process. However, with the exception of some simple methods and models, methods for constructing confidence intervals usually involve approximations and simplifying assumptions, so their actual coverage rate might deviate from the intended confidence level.

We typically measure confidence interval performance along two dimensions: coverage rate and expected width. Suppose that the confidence interval is for the target parameter \(\theta\) and has intended coverage level \(\beta\) for \(0 < \beta < 1\). Denote the lower and upper end-points of the \(\beta\)-level confidence interval as \(A\) and \(B\). \(A\) and \(B\) are random quantities—they will differ each time we compute the interval on a different dataset. The coverage rate of a \(\beta\)-level interval estimator is the probability that it covers the true parameter, formally defined as \[ \text{Coverage}(A,B) = \Prob(A \leq \theta \leq B). \tag{9.17} \] For a well-performing interval estimator, \(\text{Coverage}\) will be at least \(\beta\) and ideally will not exceed \(\beta\) by too much. Some analysts prefer to look at lower and upper coverage separately, where lower coverage is \(\Prob(A \leq \theta)\) and upper coverage is \(\Prob(\theta \leq B)\), and where it is desirable for each of these rates to be near \(\beta / 2\).

The expected width of a \(\beta\)-level interval estimator is the average difference between the upper and lower endpoints, formally defined as \[ \text{Width}(A,B) = \E(B - A). \tag{9.18} \] Smaller expected width means that the interval tends to be narrower, on average, and thus more informative about the value of the target parameter. Expected width is related to the average estimated standard error, but also includes how much wider we need to make our interval if we have a degree-of-freedom correction.

In practice, we approximate the coverage and width of a confidence interval by summarizing across replications of the data-generating process. Let \(A_r\) and \(B_r\) denote the lower and upper end-points of the confidence interval from simulation replication \(r\), and let \(W_r = B_r - A_r\), all for \(r = 1,...,R\). The coverage rate and expected length measures can be estimated as \[ \begin{aligned} \widehat{\text{Coverage}}(A,B) &= \frac{1}{R}\sum_{r=1}^R I(A_r \leq \theta \leq B_r) \\ \widehat{\text{Width}}(A,B) &= \frac{1}{R} \sum_{r=1}^R W_r = \frac{1}{R} \sum_{r=1}^R \left(B_r - A_r\right). \end{aligned} \tag{9.19} \] Following a strict statistical interpretation, a confidence interval performs acceptably if it has actual coverage rate greater than or equal to \(\beta\). If multiple methods satisfy this criterion, then the method with the lowest expected width would be preferable.

In many instances, confidence intervals are constructed using point estimators and uncertainty estimators. For example, a conventional Wald-type confidence interval is centered on a point estimator, with end-points taken to be a multiple of an estimated standard error below and above the point estimator: \[ A = T - c \times \widehat{SE}, \quad B = T + c \times \widehat{SE} \] for some critical value \(c\) (e.g., for a normal critical value with a \(\beta = 0.95\) confidence level, \(c = 1.96\)). Because of these connections, confidence interval coverage will often be closely related to the performance of the point estimator and variance estimator. Biased point estimators will tend to have confidence intervals with coverage below the desired level because they are not centered in the right place. Likewise, variance estimators that have relative bias below 1 will tend to produce confidence intervals that are too short, leading to coverage below the desired level. Thus, confidence interval coverage captures multiple aspects of the performance of an estimation procedure.

9.3.1 Confidence Intervals in the Cluster RCT Simulation

Returning to the CRT simulation, we will examine the coverage and expected width of normal Wald-type confidence intervals for each of the estimators under consideration.

To do this, we first have to calculate the confidence intervals because we did not do so in the estimation function.

We compute a normal critical value for a \(\beta = 0.95\) confidence level using qnorm(0.975), then compute the lower and upper end-points using the point estimators and estimated standard errors:

runs_CIs <-

runs %>%

mutate(

A = ATE_hat - qnorm(0.975) * SE_hat,

B = ATE_hat + qnorm(0.975) * SE_hat

)Now we can estimate the coverage rate and expected width of these confidence intervals:

runs_CIs %>%

group_by( method ) %>%

summarise(

coverage = mean( A <= ATE & ATE <= B ),

width = mean( B - A )

)## # A tibble: 3 × 3

## method coverage width

## <chr> <dbl> <dbl>

## 1 Agg 0.948 0.877

## 2 LR 0.92 0.903

## 3 MLM 0.945 0.872The coverage rate is close to the desired level of 0.95 for the multilevel model and aggregation estimators, but it is a bit too low for linear regression. The lower-than-nominal coverage level occurs because of the bias of the linear regression point estimator. The linear regression confidence intervals are also a bit wider than the other methods due to the larger sampling variance of its point estimator and higher variability (lower degrees of freedom) of its standard error estimator.

The normal Wald-type confidence intervals we have examined here are based on fairly rough approximations. In practice, we might want to examine more carefully constructed intervals such as ones that use critical values based on \(t\) distributions or ones constructed by profile likelihood. Especially in scenarios with a small or moderate number of clusters, such methods might provide better intervals, with coverage closer to the desired confidence level. In our earlier assessment of the variance estimator (see 9.2.3), we also found Linear Regression had lower degrees of freedom, meaning it might have notably wider intervals if they were calculated more carefully. See Exercise 9.10.4.

9.4 Measures for Inferential Procedures (Hypothesis Tests)

Hypothesis testing entails first specifying a null hypothesis, such as that there is no difference in average outcomes between two experimental groups. One then collects data and evaluates whether the observed data is compatible with the null hypothesis. Hypothesis test results are often described in terms of a \(p\)-value, which measures how extreme or surprising a feature of the observed data (a test statistic) is relative to what one would expect if the null hypothesis is true. A small \(p\)-value (such as \(p < .05\) or \(p < .01\)) indicates that the observed data would be unlikely to occur if the null is true, leading the researcher to reject the null hypothesis. Alternately, testing procedures might be formulated by comparing a test statistic to a specified critical value; a test statistic exceeding the critical value would lead the researcher to reject the null.

Hypothesis testing procedures aim to control the level of false positives, corresponding to the probability that the null hypothesis is rejected when it holds in truth. The level of a testing procedure is often denoted as \(\alpha\), and it has become conventional in many fields to conduct tests with a level of \(\alpha = .05\).16 Just as in the case of confidence intervals, hypothesis testing procedures can sometimes be developed that will have false positive rates exactly equal to the intended level \(\alpha\). However, in many other problems, hypothesis testing procedures involve approximations or simplifying assumptions, so that the actual rate of false positives might deviate from the intended \(\alpha\)-level. Knowing the risk of this is important. When we evaluate a hypothesis testing procedure, we are concerned with two primary measures of performance: validity and power.

9.4.1 Validity

Validity pertains to whether we erroneously reject a true null more often than we should. An \(\alpha\)-level testing procedure is valid if it has no more than an \(\alpha\) chance of rejecting the null, when the null is true. If we were using the conventional \(\alpha = .05\) level, then a valid testing procedure will reject the null in only about 50 of 1000 replications of a data-generating process where the null hypothesis actually holds true.

To assess validity, we will need to specify a data generating process where the null hypothesis holds (e.g., where there is no difference in average outcomes between experimental groups). We then generate a large series of data sets with a true null, conduct the testing procedure on each dataset and record the \(p\)-value or critical value, then score whether we reject the null hypothesis. In practice, we may be interested in evaluating a testing procedure by exploring data generation processes where the null is true but other aspects of the data (such as outliers, skewed outcome distributions, or small sample size) make estimation difficult, or where auxiliary assumptions of the testing procedure are violated. Examining such data-generating processes allows us to understand if our methods are robust to patterns that might be encountered in real data analysis. The key to evaluating the validity of a procedure is that, for whatever data-generating process we examine, the null hypothesis must be true.

9.4.2 Power

Power is concerned with the chance that we notice when an effect or a difference exists—that is, the probability of rejecting the null hypothesis when it does not actually hold. Compared to validity, power is a more nuanced concept because larger effects will clearly be easier to notice than smaller ones, and more blatant violations of a null hypothesis will be easier to identify than subtle ones. Furthermore, the rate at which we can detect violations of a null will depend on the \(\alpha\) level of the testing procedure. A lower \(\alpha\) level will make for a less sensitive test, requiring stronger evidence to rule out a null hypothesis. Conversely, a higher \(\alpha\) level will reject more readily, leading to higher power but at a cost of increased false positives.

In order to evaluate the power of a testing procedure by simulation, we will need to generate data where there is something to detect. In other words, we will need to ensure that the null hypothesis is violated and that some specific alternative hypothesis of interest holds. The process of evaluating the power of a testing procedure is otherwise identical to that for evaluating its validity: generate many datasets, carry out the testing procedure, and track the rate at which the null hypothesis is rejected. The only difference is the conditions under which the data are generated.

We often find it useful to think of power as a function rather than as a single quantity because its absolute magnitude will generally depend on the sample size of a dataset and the magnitude of the effect of interest. Because of this, power evaluations will typically involve examining a sequence of data-generating scenarios with varying sample size or varying effect size. If we are actually planning a future analysis, we would then look to determine what sample size or effect size would give us 80% power (a traditional target power level used for planning in many cases). See Chapter 20 for more on power analysis for study planning.

Separately, if our goal is to compare testing procedures, then absolute power at substantively large effects is often less informative than the relative power of different testing procedures at substantively small effects, near to but not exactly at the null. In this setting, attention naturally shifts to infinitesimal power—that is, how quickly power increases as we move from a null effect to very small alternatives. Examining infinitesimal power helps identify which tests are most sensitive to small departures from the null in a more standardized way.

9.4.3 Rejection Rates

When evaluating either validity or power, the main performance measure is the rejection rate of the hypothesis test. Let \(P\) be the \(p\)-value from a procedure for testing the null hypothesis that a parameter \(\theta = 0\). The rejection rate is then \[ \rho_\alpha(\theta) = \Prob(P < \alpha) \tag{9.20} \]

When data are simulated from a process in which the null hypothesis is true (i.e., \(\theta\) really does equal 0), then the rejection rate is equivalent to the Type-I error rate of the test. Ideally, a testing procedure should have actual Type-I error exactly equal to the nominal level \(\alpha\). When the data are simulated from a process in which the null hypothesis is violated (\(\theta \neq 0\)), then the rejection rate is equivalent to the power of the test (for the given scenario defined by the DGP and the alternate hypothesis specified by the value of \(\theta\)).

To estimate the rejection rate of a test, we calculate the proportion of replications where the test rejects the null hypothesis. Letting \(P_1,...,P_R\) be the \(p\)-values simulated from \(R\) replications of a data-generating process with true parameter \(\theta\), we estimate the rejection rate by calculating \[ r_\alpha(\theta) = \frac{1}{R} \sum_{r=1}^R I(P_r < \alpha). \tag{9.21} \] It may be of interest to evaluate the performance of the test at several different \(\alpha\) levels. For instance, Brown and Forsythe (1974) evaluated the Type-I error rates and power of their tests using \(\alpha = .01\), \(.05\), and \(.10\). Simulating the \(p\)-value of the test makes it easy to estimate rejection rates for multiple \(\alpha\) levels, since we simply need to apply Equation (9.21) for several values of \(\alpha\). When simulating from a data-generating process where the null hypothesis holds, one can also plot the empirical cumulative distribution function of the \(p\)-values; for an exactly valid test, the \(p\)-values should follow a standard uniform distribution with a cumulative distribution falling along the \(45^\circ\) line.

Methodologists hold a variety of perspectives on how close the actual Type-I error rate should be in order to qualify as suitable for use in practice. Following a strict statistical definition, a hypothesis testing procedure is said to be level-\(\alpha\) if its actual Type-I error rate is always less than or equal to \(\alpha\), for any specific conditions of a data-generating process. Among a collection of level-\(\alpha\) testing procedures, we would prefer the one with highest power. If looking only at null rejection rates, then the test with Type-I error closest to \(\alpha\) would usually be preferred.

9.4.4 Inference in the Cluster RCT Simulation

Returning to the cluster RCT simulation, we evaluate the validity and power of hypothesis tests for the average treatment effect based on each of the three estimation methods. The data used in previous sections of the chapter was simulated under a process with a non-null treatment effect parameter (equal to 0.3 SDs), so the null hypothesis of zero average treatment effect does not hold. Thus, the rejection rates for this scenario correspond to estimates of power. We compute the rejection rate for tests with an \(\alpha\) level of \(.05\):

## # A tibble: 3 × 2

## method power

## <chr> <dbl>

## 1 Agg 0.241

## 2 LR 0.32

## 3 MLM 0.263For this particular scenario, none of the tests have especially high power, and the linear regression estimator apparently has higher power than the aggregation method and the multi-level model.

To make sense of this power pattern, we should consider the validity of the testing procedures.

We can do so by running a new simulation using the function we wrote in Section 8.3, but with an average treatment effect of zero (i.e., we make the null hypothesis true), an elevated size_coef and alpha parameter to induce additional treatment effect heterogeneity, and a larger number of clusters to get more stable estimation:

set.seed( 4404044 )

runs_val <- run_CRT_sim( reps = 1000,

n_bar = 30, J = 30,

ATE = 0, ICC = 0.2,

size_coef = 0.75, alpha = 0.75 )Assessing validity involves repeating the exact same rejection rate calculations as we did for power. We also estimate the bias of the point estimator, since bias can affect the validity of a testing procedure:

runs_val %>%

group_by( method ) %>%

summarise(

bias = mean(ATE_hat),

power = mean( p_value <= 0.05 )

)## # A tibble: 3 × 3

## method bias power

## <chr> <dbl> <dbl>

## 1 Agg -0.00147 0.06

## 2 LR 0.129 0.104

## 3 MLM 0.0170 0.065The aggregation and multi-level modeling approaches both seem to reject a bit below 0.05, at 0.04, while LR is here at 0.10. The test for the linear regression estimator has an elevated Type-I error, probably driven by the upward bias of the point estimator used in constructing the test (see the bias column). The elevated rejection rate due to bias might then be part of the reason that the linear regression had higher power than the other procedures in our power example, above. If so, it is not entirely fair to compare the power of the three testing procedures, because one of them has Type-I error in excess of the desired level.

As discussed above, linear regression targets the person-level average treatment effect.

In the scenario we just simulated for evaluating validity, the person-level average effect is not zero even though our cluster-average effect is zero, because we have specified a non-zero impact heterogeneity parameter (size_coef=0.75) along with positive school size variation (alpha = 0.75), so that the school-specific treatment effects vary around 0.

To see if this is why the linear regression test has an inflated Type-I error rate, we could re-run the simulation using settings where both the school-level and person-level average effects are truly zero by setting size_coef = 0.

9.5 Relative or Absolute Measures?

A relative measure is when you compare a performance measure to the target quantity by taking a ratio. For example, relative bias is the expected value of an estimator divided by the true parameter value. As we saw when evaluating variance, above, relative measures can be very powerful, giving statements such as “this estimator’s standard errors are 10% too big, on average.” In the best case, they are easy to interpret because they are unitless. However, they also suffer from some notable drawbacks.

Relative measures can be difficult to interpret under several circumstances. For one, ratios can be deceiving when the denominator is near zero. This can be a problem when, for example, examining bias relative to a benchmark estimator. They can also be problematic when the denominator, i.e. reference value, can take on either negative or positive values. Finally, if the reference value changes unexpectedly with changes in the simulation context, then relative measures can be misleading.

We now discuss these issues in more detail and offer guidance on how to decide whether to to use relative or absolute performance measures.

9.5.1 Performance relative to the target parameter

In considering performance measures for point estimators, we have defined the measures in terms of differences (bias, median bias) and average deviations (variance and RMSE), all of which are on the scale of the target parameter. In contrast, for evaluating estimated standard errors we have defined measures in relative terms, calculated as ratios of the average estimate to the target quantity, rather than as differences. In the latter case, relative measures are justified because the target quantity (the true degree of uncertainty) is always positive and is usually strongly affected by design parameters of the data-generating process. Is it ever reasonable to use relative measures for point estimators? If so, how should we decide whether to use relative or absolute measures?

Many published simulation studies do use relative performance measures for evaluating point estimators. For instance, studies might use relative bias or relative RMSE, defined as \[ \begin{aligned} \text{Relative }\Bias(T) &= \frac{\E(T)}{\theta}, \\ \text{Relative }\RMSE(T) &= \frac{\sqrt{\E\left[\left(T - \theta\right)^2 \right]}}{\theta}. \end{aligned} \tag{9.22} \] and estimated as \[ \begin{aligned} \widehat{\text{Relative }\Bias(T)} &= \frac{\bar{T}}{\theta}, \\ \widehat{\text{Relative }\RMSE(T)} &= \frac{\widehat{RMSE}(T)}{\theta}. \end{aligned} \tag{9.23} \] As justification for evaluating bias in relative terms, authors often appeal to Hoogland and Boomsa (1998), who suggested that relative bias of under 5% (i.e., relative bias falling between 0.95 and 1.05) could be considered acceptable for an estimation procedure. However, Hoogland and Boomsa (1998) were writing about a very specific context—robustness studies of structural equation modeling techniques—that have parameters of a particular form. In our view, their proposed rule-of-thumb is often generalized far beyond the circumstances where it is defensible, including to problems where it is clearly arbitrary and inappropriate.

One big problem with relative bias, in particular, arises when the target parameter is equal to or near zero. Suppose you have a bias of 0.1 that is consistent across values of the true parameter. For a true parameter value of 0.2, the relative bias would be 0.5. But if the true value were 0.01, then the relative bias would be 10! As the true value gets smaller and smaller, the relative bias will explode.

Another problem with relative bias occurs when the target parameter is on an arbitrary scale, so that the absolute magnitude is not meaningful. For example, in our Cluster RCT simulation, the relative bias of the ATE estimators would change if we moved the ATE from 0.2 to 0.5, or from 0.2 to 0 (where relative bias would now be entirely undefined). In contrast, the absolute bias would stay the same regardless of the ATE.

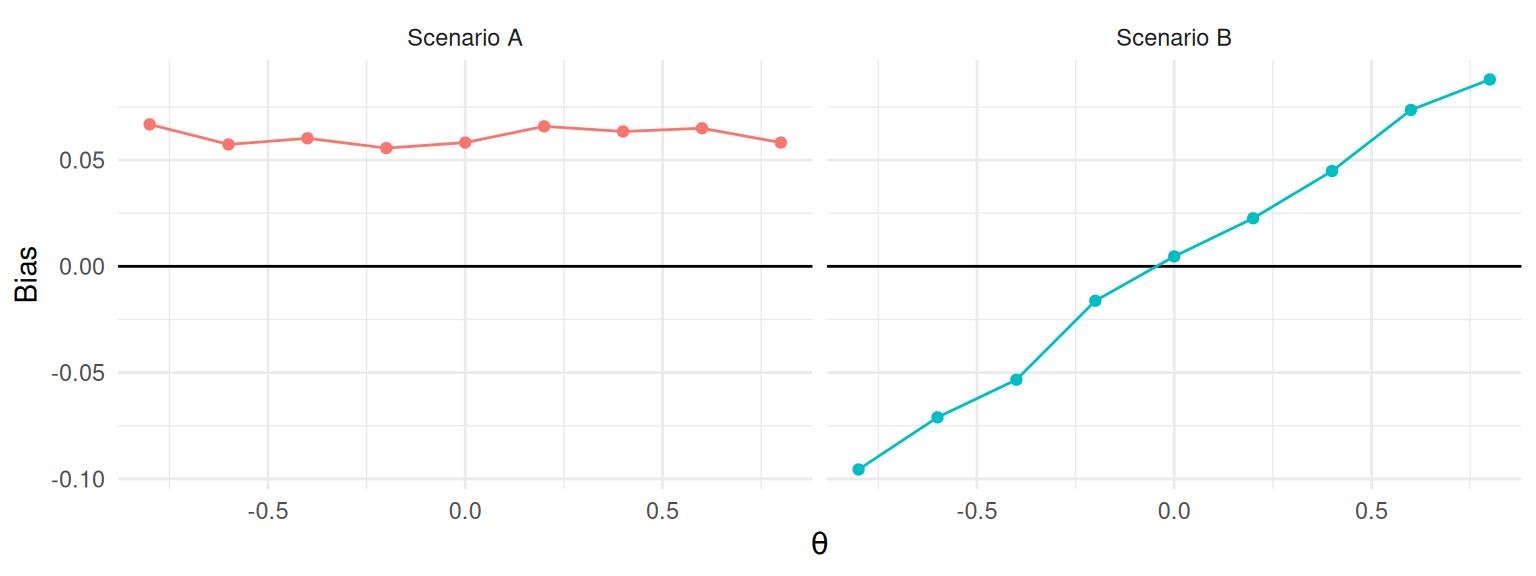

A more principled approach to choosing between whether to use absolute and relative measures is to first consider whether the magnitude of the measure changes across different values of the target parameter \(\theta\). If the estimand of interest is a location parameter, then the bias, variance or RMSE of an estimator will likely not change as \(\theta\) changes (see Figure 9.2, left). Focusing on relative measures in this scenario would lead to a much more complicated story because different values of \(\theta\) will produce drastically different values, ranging from nearly unbiased to nearly infinite bias (for \(\theta\) very close to zero). Better to use absolute bias, which is nearly constant and simpler to interpret and explain. On the other hand, if changing \(\theta\) would lead to proportionate changes in the magnitude of bias, variance, or RMSE, then relative bias may be useful because it could lead to a simple story such as “the estimator is usually estimating around 12% too high,” or similar. This is what is shown at right of Figure 9.2. We usually expect this type of pattern to occur for scale parameters, such as standard deviations or standard errors (as we considered in 9.2).

Figure 9.2: Hypothetical relationships between bias and a target parameter \(\theta\). In Scenario A, bias is unrelated to \(\theta\) and absolute bias is a more appropriate measure. In Scenario B, bias is proportional to \(\theta\) and relative bias is a more appropriate measure.

How do we know which of these scenarios is a better match for a particular problem? For some estimators and data-generating processes, it may be possible to use statistical theory to examine the how bias or variance would be expected to change as a function of \(\theta\). However, many problems are too complex to be tractable. A generally more feasible route is to evaluate performance for multiple values of the target parameter and then generate a graph such as shown in Figure 9.2 to understand how performance changes as a function of the target parameter. If the absolute bias is roughly the same for all values of \(\theta\) (as in Scenario A), then use absolute bias. On the other hand, if the bias grows roughly in proportion to \(\theta\) (as in Scenario B), then relative bias might be a better summary criterion. Generally, unless you believe bias must be zero if the parameter \(\theta\) is zero, absolute bias is usually the safer choice.

9.5.2 Performance relative to a benchmark estimator

Another way to define performance measures in relative terms is to take the ratio of the performance measure for one estimator over the performance measure for a benchmark estimator (or some other benchmark quantity). We have already demonstrated this approach in comparing standard errors and RMSEs for the cluster RCT example (Section 9.1.1), where we used the linear regression estimator as the benchmark. This approach is natural in simulations that involve comparing the performance of multiple estimators and where one of the estimators could be considered the current standard or conventional method.

Comparing the performance of one estimator relative to another can be especially useful when examining measures whose magnitude varies drastically across design parameters. For example, we typically expect precision and accuracy to improve (variance and RMSE to decrease) as sample size increases. Comparing estimators in terms of relative precision or relative accuracy may then make it easier to identify consistent patterns in the simulation results. A relative measure might allow us to summarize findings by saying that “the aggregation estimator has standard errors that are consistently 6-10% smaller than the standard errors of the linear regression estimator.” This is much easier to interpret than saying that “aggregation has standard errors that are around 0.01 smaller than linear regression, on average.” In the latter case, it is very difficult to determine whether a difference of 0.01 is large or small. Furthermore, focusing on an average difference conceals relevant variation across scenarios involving different sample sizes.

Comparing performance relative to a benchmark method can be an effective tool, but it also has potential drawbacks. Because these relative performance measures are inherently comparative, higher or lower ratios could either be due to the behavior of the method of interest (the numerator) or due to the behavior of the benchmark method (the denominator). Consider if the benchmark completely falls apart under some circumstance, but the comparison only worsens a slight bit. The relative measure, focused on the comparison, would appear to be a marked improvement, which could be deceptive. Ratio comparisons are also less effective for performance measures, such as power, that are on a constrained scale. If we have a power of 0.05, and we improve it to 0.10, we have doubled our power, but if it is 0.50 and we increase to 0.55, we have only increased by 10%. Whether this is misleading or not will depend on context.

9.6 Estimands Not Represented By a Parameter

In the Cluster RCT example, we focused on the estimand of the school-level ATE, represented by the model parameter \(\gamma_1\). What if we were instead interested in the person-level average effect? This estimand does not correspond to any input parameter in our data generating process. Instead, it is defined implicitly by a combination of other parameters. In order to compute performance characteristics such as bias and RMSE, we would need to first calculate the target estimand based on the inputs to the data-generating processes. There are at least three possible ways to accomplish this: mathematical theory, generating a massive dataset, or tracking the estimand during simulation.

Mathematical theory. One approach is to use mathematical distribution theory to compute the implied parameter.

Our target parameter will be some function of the parameters and random variables in the data-generating process, and it may be possible to evaluate that function algebraically or numerically (i.e., using numerical integration functions such as integrate()).

This can be a very worthwhile exercise if it provides insights into the relationship between the target parameter and the inputs of the data-generating process.

However, this approach requires knowledge of distribution theory, and it can get quite complicated and technical.17

Massive dataset. Another approach is to generate a massive dataset—one so large that it can stand in for the entire data-generating model—and then simply calculate the target parameter of interest in this massive dataset. In the cluster-RCT example, we can apply this strategy by generating data from a very large number of clusters and then simply calculating the true person-average effect across all generated clusters. If the dataset is big enough, then the uncertainty in this estimate will be negligible compared to the uncertainty in our simulation.

We implement this approach as follows, generating a dataset with 100,000 clusters:

dat <- gen_cluster_RCT(

n_bar = 30, J = 100000,

gamma_1 = 0.3, gamma_2 = 0.5,

sigma2_u = 0.20, sigma2_e = 0.80,

alpha = 0.5

)

ATE_person <- mean( dat$Yobs[dat$Z==1] ) - mean( dat$Yobs[dat$Z==0] )Our extremely precise estimate of the person-average effect is 0.342, which is consistent with what we would expect given the bias we saw earlier for the linear model.

If we recalculate performance measures for all of our estimators with respect to the ATE_person estimand, the bias and RMSE of our estimators will shift, although the standard errors will stay the same.

performance_person_ATE <-

runs %>%

group_by( method ) %>%

summarise(

bias = mean( ATE_hat ) - ATE_person,

SE = sd( ATE_hat ),

RMSE = sqrt( mean( (ATE_hat - ATE_person)^2 ) )

) %>%

mutate( per_RMSE = RMSE / RMSE[method=="LR"] )

performance_person_ATE## # A tibble: 3 × 5

## method bias SE RMSE per_RMSE

## <chr> <dbl> <dbl> <dbl> <dbl>

## 1 Agg -0.0424 0.214 0.218 0.959

## 2 LR 0.0402 0.224 0.227 1

## 3 MLM -0.0300 0.213 0.215 0.947For the person-weighted estimand, the aggregation estimator and multilevel model are biased but the linear regression estimator is unbiased. However, the aggregation estimator and multilevel model estimator still have smaller standard errors than the linear regression estimator. RMSE now captures the trade-off between increased bias and reduced variance. Overall, aggregation and multilevel modeling have an RMSE that is around 5% smaller than linear regression. The greater precision is worth the bias price.

Track during the simulation. A further approach for calculating ATE_person would be to record the true person average effect of the dataset with each simulation iteration, and then average the sample-specific parameters at the end.

The overall average of the dataset-specific ATE_person parameters corresponds to the population person-level ATE.

This approach is equivalent to generating a single massive dataset—we just generate it piece by piece.

To implement this approach, we would need to modify the data-generating function gen_cluster_RCT() to track the additional information.

For instance, we might calculate

and then include tx_effect along with Yobs and Z as a column in our dataset.

This approach is quite similar to directly calculating potential outcomes, as discussed in Chapter 21.

After modifying the data-generating function, we will also need to modify the analysis function(s) to record the sample-specific treatment effect parameter. We might have, for example:

analyze_data = function( dat ) {

MLM <- analysis_MLM( dat )

LR <- analysis_OLS( dat )

Agg <- analysis_agg( dat )

res <- bind_rows(

MLM = MLM, LR = LR, Agg = Agg,

.id = "method"

)

res$ATE_person <- mean( dat$tx_effect )

return( res )

}Now when we run our simulation, we will have a column corresponding to the true person-level average treatment effect for each dataset. We could then take the average of these value across replications to estimate the true person average treatment effect in the population, and then use this as the target parameter for performance calculations.

An estimand not represented by any single input parameter is more difficult to work with than one that corresponds directly to an input parameter. That said, it might be a more important estimand than one represented directly with an input parameter. As the above shows, it is quite feasible to examine such estimands with a bit of forethought and careful programming. The key is to be clear about what you are trying to estimate because the performance of an estimator depends on the estimand against which it is compared.

9.7 Uncertainty in Performance Estimates (the Monte Carlo Standard Error)

The performance measures we have described are all defined in terms of an infinite number of replications of the data-generating process. Of course, simulations will only involve a finite set of replications, based on which we estimate the measures. These estimates involve some Monte Carlo error because they are based on a limited number of replications. If we re-ran the simulation (with a different seed), our estimates would change. We can quantify how much they might change by assessing Monte Carlo error.

To account for Monte Carlo error, we can think of our simulation results as a sample from a population. Each replication is independent drawn from the population of the sampling distribution. Once we frame the problem in these terms, we can apply standard statistical techniques to calculate standard errors. We call these standard errors Monte Carlo Simulation Errors, or MCSEs. For most performance measures, closed-form expressions for their MCSEs are available. If no closed-form expressions are available, we can still estimate MCSEs using techniques such as the jackknife or bootstrap.

9.7.1 Conventional measures for point estimators

For the performances measures that we have described for evaluating point estimators, Monte Carlo standard errors can be calculated using conventional formulas.18 Recall that we have a point estimator \(T\) of a target parameter \(\theta\), and we calculate the mean of the estimator \(\bar{T}\) and its sample standard deviation \(S_T\) across \(R\) replications of the simulation process. In addition, we will need to calculate the standardized skewness and kurtosis of \(T\) as

\[ \begin{aligned} \text{Skewness (standardized):} & &g_T &= \frac{1}{R S_T^3}\sum_{r=1}^R \left(T_r - \bar{T}\right)^3 \\ \text{Kurtosis (standardized):} & &k_T &= \frac{1}{R S_T^4} \sum_{r=1}^R \left(T_r - \bar{T}\right)^4. \end{aligned} \tag{9.24} \]

The bias of \(T\) is estimated as \(\bar{T} - \theta\), so the MCSE for bias is equal to the MCSE of \(\bar{T}\) because \(\theta\) is fixed. It can be estimated as \[ MCSE\left(\widehat{\Bias}(T)\right) = \sqrt{\frac{S_T^2}{R}}. \tag{9.25} \] The sampling variance of \(T\) is estimated as \(S_T^2\), with a MCSE of \[ MCSE\left(\widehat{\Var}(T)\right) = S_T^2 \sqrt{\frac{k_T - 1}{R}}. \tag{9.26} \] The empirical standard error (the square root of the sampling variance) is estimated as \(S_T\). Using a delta method approximation,19 the MCSE of \(S_T\) is approximately \[ MCSE\left(S_T\right) \approx \frac{S_T}{2}\sqrt{\frac{k_T - 1}{R}}. \tag{9.27} \]

We estimate RMSE using Equation (9.5), which can also be written as \[ \widehat{\RMSE}(T) = \sqrt{(\bar{T} - \theta)^2 + \frac{R - 1}{R} S_T^2}. \] The MCSE of the estimated mean squared error (the square of RMSE) is \[ MCSE( \widehat{MSE} ) = \sqrt{\frac{1}{R}\left[S_T^4 (k_T - 1) + 4 S_T^3 g_T\left(\bar{T} - \theta\right) + 4 S_T^2 \left(\bar{T} - \theta\right)^2\right]}. \tag{9.28} \] Again following a delta method approximation, a MCSE for the RMSE is \[ MCSE( \widehat{RMSE} ) = \frac{\sqrt{\frac{1}{R}\left[S_T^4 (k_T - 1) + 4 S_T^3 g_T\left(\bar{T} - \theta\right) + 4 S_T^2 \left(\bar{T} - \theta\right)^2\right]}}{2 \times \widehat{RMSE}}. \tag{9.29} \]

Section 9.5 discussed circumstances where we might prefer to calculate performance measures in relative rather than absolute terms. For measures that are calculated by dividing a raw measure by the known target parameter, the MCSE for the relative measure is simply the MCSE for the raw measure divided by the target parameter. For instance, the MCSE of relative bias \(\bar{T} / \theta\) is \[ MCSE\left( \frac{\bar{T}}{\theta} \right) = \frac{1}{\theta} MCSE(\bar{T}) = \frac{S_T}{\theta \sqrt{R}}. \tag{9.30} \] MCSEs for relative variance and relative RMSE follow similarly.

9.7.2 MCSEs for robust measures for point estimators

In Section 9.1.2 we described several alternative performance measures for evaluating point estimators, which are less commonly used but are more robust to outliers compared to measures such as bias and variance. MCSEs for these less conventional measures can be obtained using results from the theory of robust statistics (Hettmansperger and McKean 2010; Maronna, Martin, and Yohai 2006).

McKean and Schrader (1984) proposed a standard error estimator for the sample median from a continuous but not necessarily normal distribution, derived from a non-parametric confidence interval for the sample median. We use their approach to compute a MCSE for \(M_T\), the sample median of \(T\). Let \(c = \left\lceil(R + 1) / 2 - 1.96 \times \sqrt{R/4}\right\rfloor\), where the inner expression is rounded to the nearest integer. Then \[ MCSE\left(M_T\right) = \frac{T_{(R + 1 - c)} - T_{(c)}}{2 \times 1.96}. \tag{9.31} \] A Monte Carlo standard error for the median absolute deviation can be computed following the same approach, but substituting the order statistics of \(E_r = | T_r - \theta|\) in place of those for \(T_r\).

Trimmed mean bias with trimming proportion \(p\) is calculated by taking the mean of the the middle \((1 - 2p) \times R\) observations, which we have denoted as \(\tilde{T}_{\{p\}}\). A MCSE for the trimmed mean (and for the trimmed mean bias) is \[ MCSE\left(\tilde{T}_{\{p\}}\right) = \sqrt{\frac{U_p}{R}}, \tag{9.32} \] where, taking from (Maronna, Martin, and Yohai 2006, Eq. 2.85), \[ U_p = \frac{1}{(1 - 2p)R}\left( pR\left(T_{(pR)} - \tilde{T}_{\{p\}}\right)^2 + pR\left(T_{((1-p)R + 1)} - \tilde{T}_{\{p\}}\right)^2 + \sum_{r=pR + 1}^{(1 - p)R} \left(T_{(r)} - \tilde{T}_{\{p\}}\right)^2 \right) . \] The additional uncertainty due to the thresholds is ignorable

Performance measures based on winsorization include winsorized bias, winsorized standard error, and winsorized RMSE. MCSEs for these measures can be computed using the same formuals as for the conventional measures of bias, empirical standard error, and RMSE, but using sample moments of \(\hat{X}_r\) in place of the sample moments of \(T_r\).

9.7.3 MCSE for Confidence Intervals and Hypothesis Tests

Performance measures for confidence intervals and hypothesis tests are simple compared to those we have described for point and variance estimators. For evaluating hypothesis tests, the main measure is the rejection rate of the test, which is a proportion estimated as \(r_\alpha\) (Equation (9.21)). The MCSE for the estimated rejection rate is \[ MCSE(r_\alpha) = \sqrt{\frac{r_\alpha ( 1 - r_\alpha)}{R}}. \tag{9.33} \] This MCSE uses the estimated rejection rate to approximate its Monte Carlo error. When evaluating the validity of a test, we might expect the rejection rate to be fairly close to the nominal \(\alpha\) level, in which case we could compute a MCSE using \(\alpha\) in place of \(r_\alpha\), taking \(\sqrt{\alpha(1 - \alpha) / R}\). When evaluating power, we will not usually know the neighborhood of the rejection rate in advance of the simulation. However, a conservative upper bound on the MCSE can be derived by observing that MCSE is maximized when \(r_\alpha = \frac{1}{2}\), and so \[ MCSE(r_\alpha) \leq \frac{1}{2 \sqrt{R}}. \]

When evaluating confidence interval performance, we focus on coverage rates and expected widths. MCSEs for the estimated coverage rate work similarly to those for rejection rates. If the coverage rate is expected to be in the neighborhood of the intended coverage level \(\beta\), then we can approximate the MCSE as \[ MCSE(\widehat{\text{Coverage}}(A,B)) = \sqrt{\frac{\beta(1 - \beta)}{R}}. \tag{9.34} \] Alternately, Equation (9.34) could be computed using the estimated coverage rate \(\widehat{\text{Coverage}}(A,B)\) in place of \(\beta\).

Finally, the expected confidence interval width is estimated as \(\bar{W}\), with MCSE \[ MCSE(\bar{W}) = \sqrt{\frac{S_W^2}{R}}, \tag{9.35} \] where \(S_W^2\) is the sample variance of \(W_1,...,W_R\), the widths of the confidence interval from each replication.

9.7.4 Using the Jackknife to obtain MCSEs (for relative variance estimators)

Estimating the MCSE of relative performance measures for variance estimators is complicated by the appearance of an estimated quantity in the denominator of the ratio. For instance, the relative bias of \(V\) is estimated as the ratio \(\bar{V} / S_T^2\), and both the numerator and denominator are estimated quantities that will include some Monte Carlo error. To properly account for the Monte Carlo uncertainty of the ratio, one possibility is to use formulas for the standard errors of ratio estimators. Alternately, we can use general uncertainty approximation techniques such as the jackknife or bootstrap (Boos and Osborne 2015). The jackknife involves calculating a statistic of interest repeatedly, each time excluding one observation from the calculation. The variance of this set of one-left-out statistics usually serves as a reasonable approximation to the actual sampling variance of the statistic calculated from the full sample.